
output-folder where you want to save your file. save-crawl saves your data to a ospider. headless is required for command line processes. Below are required to accomplish a basic example. Screamingfrogseospider -crawl -headless -save-crawl -output-folder ~/crawls-2023wk08 -timestamped-output Since we're working in headless mode, we'll want to disable the embedded browser. If you use a database instead of in-memory, add this to nfig.

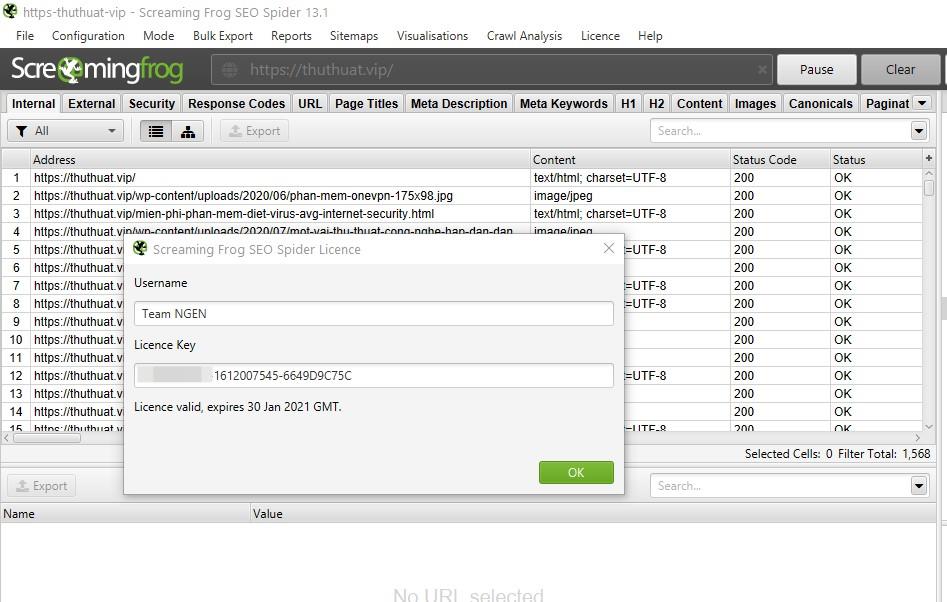

Your default mode is in-memory, but you might want to add a database file if you're dealing with stats like these. If you're unsure of your available memory, try this command. Suppose you want to increase your memory to 8GB. If you want to change the amount of memory, you want to allocate to the crawler, then create another configuration file. screaming_frog_usernameĬreate a new nfig file within the same directory. sudo nano ~/.ScreamingFrogSEOSpider/licence.txt

I've included a diagram below to see other common places to use within the Ubuntu file directory.*Ĭonfigure Add your paid license in headless mode.Ĭreate a new license.txt file within a hidden directory called. If you're unsure where to download your package, you can always use /usr/local/bin.Sudo dpkg -i /path/to/download/dir/screamingfrogseospider_18.2_all.deb Visit Screaming Frog's Check Updates page to identify the latest version number. Command line crawling with Screaming Frog SEO Spider.
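The configuration steps above can be sketched as one small Bash script. This is a sketch under assumptions, not Screaming Frog's official setup: the spider.config keys `embeddedBrowser.enable=false` and `storage.mode=DB`, the memory file `~/.screamingfrogseospider` holding the JVM option `-Xmx8g`, and the variables `SF_DIR`, `MEM_FILE`, `SF_USER`, `SF_KEY` and `SITE_URL` are my own assumptions, so check them against the user guide for your installed version. To see how much memory is available before choosing a heap size, `free -h` is one option.

```shell
#!/usr/bin/env bash
# Sketch: prepare Screaming Frog SEO Spider for a headless crawl on Ubuntu.
# Config keys and the memory file location are ASSUMPTIONS; verify them
# against the Screaming Frog user guide for your installed version.
set -euo pipefail

# Hypothetical variables; override any of them from the environment.
SF_DIR="${SF_DIR:-$HOME/.ScreamingFrogSEOSpider}"     # hidden config directory
MEM_FILE="${MEM_FILE:-$HOME/.screamingfrogseospider}" # assumed JVM options file
SF_USER="${SF_USER:-screaming_frog_username}"
SF_KEY="${SF_KEY:-YOUR-LICENCE-KEY}"                  # placeholder, not a real key
SITE_URL="${SITE_URL:-https://www.example.com}"       # placeholder site

mkdir -p "$SF_DIR"

# licence.txt: username on the first line, licence key on the second.
printf '%s\n%s\n' "$SF_USER" "$SF_KEY" > "$SF_DIR/licence.txt"

# spider.config: no embedded browser in headless mode, and database
# storage instead of the default in-memory mode (assumed key names).
cat > "$SF_DIR/spider.config" <<'EOF'
embeddedBrowser.enable=false
storage.mode=DB
EOF

# Give the crawler's JVM an 8GB heap (assumed option format).
printf '%s\n' "-Xmx8g" > "$MEM_FILE"

# Output folder named by ISO year and week, e.g. crawls-2023wk08.
OUT_DIR="$HOME/crawls-$(date +%Gwk%V)"
mkdir -p "$OUT_DIR"

# Run the crawl only if the spider is installed; otherwise print the command.
CMD=(screamingfrogseospider --crawl "$SITE_URL" --headless --save-crawl
     --output-folder "$OUT_DIR" --timestamped-output)
if command -v screamingfrogseospider >/dev/null 2>&1; then
  "${CMD[@]}"
else
  echo "screamingfrogseospider not found; would run: ${CMD[*]}"
fi
```

Naming the output folder from `date +%Gwk%V` keeps one folder per ISO week, matching the `crawls-2023wk08` style used in the command above; `--timestamped-output` then separates individual runs inside it.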
